How Business Analysts can enable and participate in Test Driven Development

— Testing, Software Development — 10 min read

This post is based on a presentation I gave at a BA meetup at work but I felt it was an interesting enough subject to share my thoughts with the wider community.

What is Test Driven Development?

Test Driven Development (TDD) is a software development process that moves the verification of the system’s behaviour from something carried out after a change has been implemented to part of the development process itself.

TDD is not a new concept. It has been around at least since the days of Extreme Programming (XP) in the late 1990s, but as agile development evolved, the more widely adopted frameworks weren’t as prescriptive as XP about how code should be written. This has led teams to ‘rediscover’ the practice as they go through their agile journey.

At its core, TDD is about feedback. The tests give feedback on whether the system is still working as expected, and during story refinement the team gives feedback on how testable the system is and how to increase testability to make TDD easier to do.

Your tests will also help document the system. By writing a test of the intended behaviour of the system, you’re not only helping the developer building that functionality but also those who will work on that code (and the system) in the future.

With a comprehensive definition of how the system should behave you give yourself a safety net against unintended side effects that arise when changes are made at a code, configuration or infrastructural level and a quicker feedback loop for finding defects.

By exploring how the changes to the system will be tested during story refinement you have the flexibility to identify and define edge case behaviours before the change has been implemented and potential re-work needs to happen.

TDD isn’t just a ‘Dev thing’

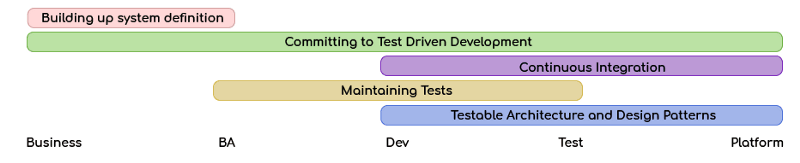

TDD is a lot more than a developer writing a set of tests for the code they’re going to build; it needs the entire team to participate:

- TDD requires a high level of definition around the system’s behaviours so those defining the system need to see the value in building up that level of definition

- TDD can feel like things take longer to develop (although in the long run it actually saves time by preventing re-work) so those managing delivery expectations need to see the value in the practice in order to protect it from pressures to deliver faster

- TDD works best when there are efficient feedback loops. Continuous Integration (CI — another concept from XP) is a good way of doing this but setting up a CI pipeline may require prioritisation from external teams if developers aren’t able to set this up themselves

- TDD requires discipline within the delivery team and needs everyone working on the code to see the value in the practice so they maintain the test suite instead of seeing it as a hindrance

- TDD is enabled by testable architecture. If architectural solutions aren’t designed to make testing easy then it will be harder to verify the behaviour of each architectural component and the system as a whole

- TDD pushes testing ‘left’ and won’t work well if the test team aren’t willing to participate in the process due to contractual arrangements, politics or emotions getting in the way of collaboration

How Business Analysts can help make Test Driven Development more valuable

Business Analysts are in a perfect position to help teams practicing TDD because they are close to both the business and the delivery team.

Traceability

Tools like Allure, Zephyr and Xray allow test cases to be linked to user stories, giving bi-directional traceability between the tests being run as part of TDD and the user stories that capture the changes to the system’s behaviour. Bi-directional traceability enables the team to quickly find the tests that cover a story and makes it easier to identify the code and tests that will need removing, updating or creating during the implementation phase.

Knowing what tests cover a story allows the team to build up a test coverage metric that can be used to identify stories with low test coverage. Areas of the code base with low test coverage are riskier to work in (due to there being potential unknown behaviour) so the team can use this knowledge when estimating and raise technical debt to increase coverage when working in that area of the codebase.

Living Documentation

Living Documentation comes from BDD and is an executable definition of the system’s behaviour that is written in language understandable by the business and executed by the delivery team, in order to build a ‘living’ document of how the implemented system behaves.

When building Living Documentation, teams will often use its scenarios as acceptance criteria for a story. If the Business Analyst doesn’t define the scenarios fully (I’ve been in teams where the BA writes the scenario title but leaves the actual scenario wording to the test team), they become disconnected from those scenarios. This lessens the value that the business gets from Living Documentation, as it’s no longer defined in a language they can easily understand.

How Test Driven Development can provide value to Business Analysts

When a team practises TDD their BA will have access to more information about the system and how it’s been implemented.

Better knowledge of the team and the system

Tests are a great source of documentation of the system and if there’s a high level of traceability between tests and stories then a BA can use these tests to build up a good understanding of the system’s functionality and use this increased understanding to ask more targeted questions and capture risks that could otherwise be missed.

If a team is practicing TDD then a BA can pay attention to the types of defects being captured during development and after release as these will highlight the areas being missed during refinement and they can prompt the team to think about these areas when refining future work.

Get feedback sooner

If the team has a mature Living Documentation setup (one with a lot of well-defined, parameterised steps), the BA should be able to create new scenarios or update existing ones, trigger a test execution of those scenarios and get feedback on how much of the newly defined behaviour is already supported by the system. This can be done independently of the rest of the team.
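To make ‘parameterised steps’ a little more concrete, here is a minimal sketch of how a step library can let someone write a new scenario purely by reusing existing wording. It is not how any particular BDD tool is implemented; the `step` decorator, the step wording and the stand-in for the system under test are all assumptions for illustration.

```python
import re

STEPS = []  # (compiled pattern, function) pairs registered by @step


def step(pattern):
    """Register a function as the implementation of a step's wording."""
    def register(func):
        STEPS.append((re.compile(pattern), func))
        return func
    return register


@step(r"a product with (\d+) variants?")
def given_product(ctx, count):
    ctx["variants"] = int(count)


@step(r"the customer views the product page")
def when_page_viewed(ctx):
    # Stand-in for the real system; a real step would drive the website.
    ctx["dropdown_shown"] = ctx["variants"] >= 2


@step(r"the variant dropdown is (shown|hidden)")
def then_dropdown(ctx, state):
    assert ctx["dropdown_shown"] == (state == "shown")


def run_scenario(lines):
    """Run each line against the first registered step that matches it."""
    ctx = {}
    for line in lines:
        text = re.sub(r"^(Given|When|Then|And)\s+", "", line.strip())
        for pattern, func in STEPS:
            match = pattern.fullmatch(text)
            if match:
                func(ctx, *match.groups())
                break
        else:
            raise LookupError(f"No step matches: {line!r}")
    return ctx


run_scenario([
    "Given a product with 1 variant",
    "When the customer views the product page",
    "Then the variant dropdown is hidden",
])
```

Because the number of variants and the shown/hidden state are parameters, a scenario for a three-variant product needs no new code, only new wording. A line that no step matches raises an error, which is itself useful feedback that the behaviour isn’t covered by the step library yet.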

Bi-directional traceability allows for test results to be visible from the user story and depending on the tooling used these results can be bubbled up and aggregated to help visualise the status of the implemented functionality at an epic, backlog, sprint or slice level.

Practicing Test Driven Development

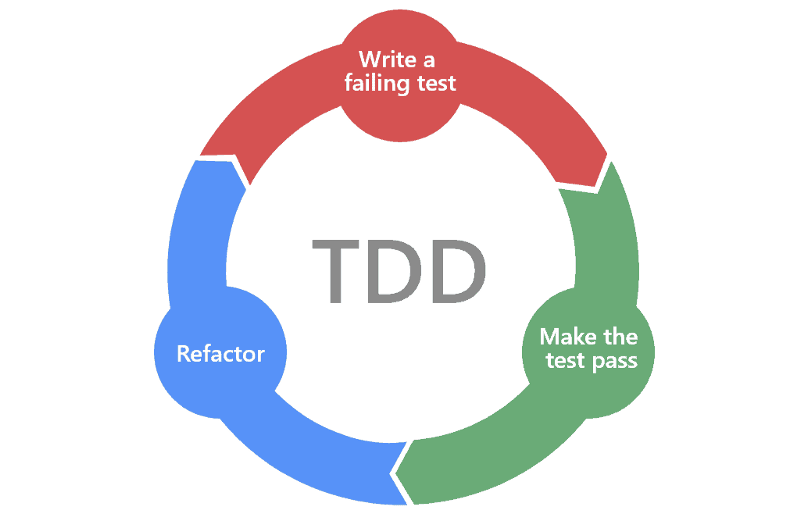

As mentioned previously, feedback is at the core of TDD, and this is embodied by one of TDD’s most important concepts: the red, green, refactor cycle.

- Red: You write a new test or update an existing test that verifies the intended behaviour of the system, but that test fails because the system doesn’t currently behave that way

- Green: You change the system’s behaviour to work as intended and that test now passes because the system behaves as expected

- Refactor: You can now use that test to ensure that the system continues to behave as expected while you change the underlying implementation to make it easier to maintain and understand

The red, green, refactor cycle can be applied to any test level. Although it’s easiest to work with at the unit test level as they require smaller changes, you can use feature flags as a way of maintaining existing behaviours while also being able to enable new behaviours when testing at higher levels (such as system testing) for behaviours that span multiple stories and changes.
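As a small illustration of the feature flag idea, here is a sketch in which the existing behaviour stays the default and the new behaviour is only reachable when a flag is switched on. The function, the flag name `variant_dropdown` and the plain dictionary used as a flag store are hypothetical.

```python
def detonation_choices(variants, flags):
    """Return the variants offered on the product page.

    `flags` is a hypothetical feature-flag lookup; the flag name is made up.
    """
    if flags.get("variant_dropdown", False):
        return list(variants)      # new behaviour: offer every variant
    return list(variants[:1])      # existing behaviour: first variant only


VARIANTS = ["Fuse", "Remote detonator"]

# Existing system-level expectation still holds with the flag off.
assert detonation_choices(VARIANTS, {}) == ["Fuse"]
# New expectation can be driven red/green with the flag on.
assert detonation_choices(VARIANTS, {"variant_dropdown": True}) == VARIANTS
```

Higher-level tests for the old behaviour keep running with the flag off, while tests for the new behaviour run with it on, so a change that spans several stories can go through the cycle without breaking what is already live.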

TDD by example

To help illustrate how each member of the team is equally important in making TDD work here’s an example of what it takes to implement a story when practicing TDD.

The business need

ACME corp have a website that sells products to coyotes who want to capture or maim their rival, the roadrunner. Their top-selling product is dynamite that explodes a certain length of time after its fuse is lit.

Their customers have asked if there’s a means to remotely detonate the dynamite, as they keep having it blow up in their faces. ACME corp has seen a gap in the market for this new means of detonation and wants to list it on their website, allowing customers to pick the method of detonation on the product page.

Marketing and UX carry out research to figure out which approach to letting customers pick a detonation method will result in the highest conversion rate, and they suggest a selectable dropdown on the product page.

The team’s BA works with the business to capture the requirements and additional information in a user story and adds it to the team’s backlog.

Story refinement

The delivery team holds a three amigos session that involves the BA, a developer and tester to refine the stories on the backlog and the new dynamite detonation story is selected for refinement.

The developer identifies that the product display page will need updating to support selecting a detonation method via a dropdown as the existing page only supports one product variant.

The developer documents the area of the codebase that the story will require changes in and identifies an existing test that needs updating to verify that no dropdown is shown when a product has one variant.

The developer and tester identify a new test that checks that products with two or more variants show a dropdown to allow the customer to select which variant they want to buy.

The team are happy the story meets their definition of ready and the story is added to the refined story backlog so the team can work on it in the future.

Writing the failing test

The story is picked up by the team to work on and the developer reads the notes on the ticket to gain an understanding of the areas the story requires changes in.

The developer updates the existing test (test 1) highlighted on the ticket to check that no dropdown is shown when the product has only one variant, and adds a new test (test 2) to check that a dropdown is shown when the product has two or more variants.

The developer runs the tests. The new test fails because the system doesn’t behave that way yet, but the existing test still passes as that behaviour already exists.
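A minimal sketch of what this red step could look like, assuming the page logic can be reduced to a single function. The `Product` model, the function name and the tiny `run` helper are hypothetical stand-ins; a real team would use their own page code and test runner.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    """Hypothetical product model; the field names are assumptions."""
    name: str
    variants: list = field(default_factory=list)


def shows_variant_dropdown(product):
    """Current behaviour: the page only supports one variant, so no dropdown."""
    return False


def check_single_variant_hides_dropdown():
    # Test 1 (updated): one variant means no dropdown.
    product = Product("Dynamite", ["Fuse"])
    assert shows_variant_dropdown(product) is False


def check_multiple_variants_show_dropdown():
    # Test 2 (new): two or more variants means a dropdown.
    product = Product("Dynamite", ["Fuse", "Remote detonator"])
    assert shows_variant_dropdown(product) is True


def run(check):
    """Tiny stand-in for a test runner: report 'pass' or 'fail'."""
    try:
        check()
    except AssertionError:
        return "fail"
    return "pass"


results = {
    "test 1": run(check_single_variant_hides_dropdown),
    "test 2": run(check_multiple_variants_show_dropdown),
}
print(results)
```

Test 2 failing at this point is the desired outcome: it shows that the test really does detect the missing behaviour before any implementation work starts.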

Making the test pass and refactoring

The developer builds the dropdown UI element and adds it to the product display page. The developer re-runs the tests and the new test (test 2) passes because the system now behaves as expected but the existing test (test 1) fails because the logic that was implemented had the dropdown visible, regardless of how many variants the product had.

The developer fixes the dropdown display logic so it only shows when there are two or more variants and re-runs the tests. This time all tests pass.
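The difference between the first attempt and the fix can be sketched as two versions of the same display rule, checked against the same two expectations. Both function names are hypothetical and the real logic would live in the product display page.

```python
def shows_dropdown_first_attempt(variants):
    # Dropdown is always rendered, whatever the product looks like.
    return True


def shows_dropdown_fixed(variants):
    # Dropdown is only rendered when there is a choice to make.
    return len(variants) >= 2


ONE = ["Fuse"]
TWO = ["Fuse", "Remote detonator"]

for name, rule in [
    ("first attempt", shows_dropdown_first_attempt),
    ("fixed", shows_dropdown_fixed),
]:
    test_1 = rule(ONE) is False   # no dropdown for a single variant
    test_2 = rule(TWO) is True    # dropdown for two or more variants
    print(name, {"test 1": test_1, "test 2": test_2})
```

Without test 1, the first attempt would have looked finished, which is why updating the existing test during refinement mattered as much as adding the new one.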

The developer reviews their code and spots a couple of places they could improve. They use the tests to refactor this code safely, as the tests will catch any regressions in behaviour.

The developer pushes their code up for review and further testing.

Continuous Integration

The continuous integration (CI) pipeline is triggered by the developer’s push of their code and runs the full test suite to ensure that the changes pushed up will still pass if those changes are merged into the main branch.

The CI breaks! It turns out another developer made a change in the main branch that isn’t compatible with the changes the developer made. The developer identifies the area of their code that caused the unintended change in behaviour and updates it so that the tests all pass again.

The developer pushes up this new code and the CI test run passes.

Code Review

The developer’s peers review the code they’ve written and identify a few areas that could be rewritten to better fit in with the design patterns used in the codebase.

The developer updates their code to follow those design patterns and because of the added testability those design patterns allow they add a few more unit tests to further check the logic.

The developer runs the tests after refactoring to ensure that there have been no regressions and pushes their code up for review again. The CI pipeline is triggered by the new push and runs the tests, which all pass again.

The developer’s peers are happy with the changes and approve of them being merged into the main branch.

Testing

The testers work with the developer to understand the implementation details of the new changes and build up a test charter of areas they’ll explore.

During testing a couple of bugs are found and the testers and developer work together to create a set of tests to reproduce the state that causes the defective behaviour. The developer adds the new tests and runs the test suite to ensure those tests fail thus reproducing the bug within the test suite.

The developer fixes the defective behaviour and runs the tests again to ensure all tests pass. The developer pushes up the fixed code, the CI runs the tests and the testers run another round of exploratory testing.

Acceptance Testing

After the team are happy that the story has been built they then make an instance of the updated website available to the business to carry out a review of the changes.

The business reviews the build and decides they don’t like the colour the developer uses for the dropdown. The developer updates the dropdown colour and runs the tests again to verify that everything is still working before redeploying the acceptance testing instance.

The business is happy with the new functionality, the developer’s latest changes are reviewed and on approval the developer’s code is merged into the main branch of the codebase.

The developer’s code is deployed into production and the new remotely detonated dynamite variant is made available to the business’ customers. A coyote buys the new dynamite variant and the plunger blows up in his face instead of the dynamite.

Common anti-patterns

TDD requires discipline and it’s easy for the team to fall into bad habits. Here are some of the ways I’ve seen TDD misunderstood.

- Implementing the changes before writing tests to cover the change being made. I like to call this Development Driven Testing (DDT) as it’s likely the tests written will be tied heavily to the implementation instead of the intended system behaviour which makes refactoring that code harder

- Bug Driven Development (the other BDD) should hopefully involve writing tests to ensure that defects don’t happen again but because the system’s behaviour is being defined after it’s tested or worse, been released, this approach is the opposite of TDD’s ideals

- TDD isn’t just testing at a unit level. It’s common for developers to see TDD as being limited to certain types of testing because that’s what they use for immediate feedback, but TDD covers all levels of testing that are applicable to verifying the intended behaviour

Summary

While Test Driven Development might seem like a development-only activity, it actually requires participation from all members of the team. Without the business and development team working together, the development team will struggle to have a good enough definition of the system to be able to write tests and the business won’t be able to benefit from the quicker feedback loop that TDD will bring.

Business Analysts are in a unique position to provide value to both parties when TDD is being practiced as they sit across that business/delivery team line. They can also get a lot of value from the TDD process themselves by utilising techniques like bi-directional traceability and living documentation to create a business facing feedback loop for how the system is behaving during development.