Mutation Testing with Stryker

— JavaScript, Testing, Automation, Software Development — 3 min read

When I was working in my previous team we had a problem.

We had a fair number of unit tests and coverage was relatively high, but we kept getting bugs in functions whose behaviour appeared to be tested.

This made it hard for the team to diagnose the issue with our process, and we had a lot of rework: finding the buggy line of code and writing more tests to ensure there were no regressions.

It was clear, however, that the issue was with the assertions we were making in our unit tests: they were not precise enough, and this let bugs through.

Take the following example:

```javascript
import Foo from 'module';

const bar = (foo) => {
  if (typeof foo === 'undefined') {
    throw new Error('Foo needs to be defined stupid!');
  }
  return new Foo(foo);
};

describe('bar', () => {
  it('Throws an error when foo isn\'t defined', () => {
    // Vague: passes for any thrown error, whatever its type or message
    expect(() => bar()).toThrow();
  });

  it('returns a new instance of Foo', () => {
    const foo = bar('foo');
    // The operator is spelled `instanceof`, all lowercase
    expect(foo instanceof Foo).toBe(true);
  });
});
```

If you run the test it'll pass because foo isn't defined. However, `toThrow()` is satisfied by any thrown error, so the test would pass just as happily if the error came from somewhere else, for instance if `Foo` were undefined because of an import error.

Instead of just asserting an exception was thrown we should also be checking the type and message sent.

How do you find vague unit tests?

Mutation testing is a technique that can help solve the issue we had with vague assertions. It mutates the code under test and reports whether the tests still pass even though the code no longer behaves as expected.

In the case of the above example, it would change the message provided when creating the Error from `'Foo needs to be defined stupid!'` to `''`. If the test still passed because of a vague assertion, the tool would report that mutant as having survived.

There are a number of frameworks available for mutation testing but the framework I’ve been using recently is Stryker which is available for JavaScript, Scala and C#.

Stryker supports a number of mutator types for JavaScript:

- Arithmetic Operator: changes `*` to `%`
- Array Declaration: changes `[1,2,3]` to `[]`
- Block Statement: changes a function block to just an empty function
- Boolean Literal: changes `true` to `false`
- Conditional Expression: changes conditionals in statements like `if` to be `true` or `false`
- Equality Operator: changes `<` to be `<=`
- Logical Operator: changes `&&` to be `||`
- String Literal: changes `"Example String"` to be `""`
- Unary Operator: changes `+a` to be `-a`
- Update Operator: changes `a++` to be `a--`

A simple function like the example above will produce around 20 mutants from these mutators.

What does mutation testing mean for quality?

I like to view mutation testing as a form of automated unit test review.

Mutation testing will not check that your unit tests are ensuring the code under test does the correct thing, but it ensures that your assertions are precise enough to catch any deviations in behaviour.

By ensuring your unit test assertions are precise, mutation testing builds quality into the code base and in the long term teaches developers how to write better unit test assertions.

Mutation testing is, however, a very time-consuming process, because you're generating a lot of mutants of the function under test (the example function generates 20) and the tests have to run against each one. It's not a lightweight tool to run on every commit. It's better suited to merge requests, or to periodic runs on a stable branch, to make sure no vague unit tests creep in unexpectedly.

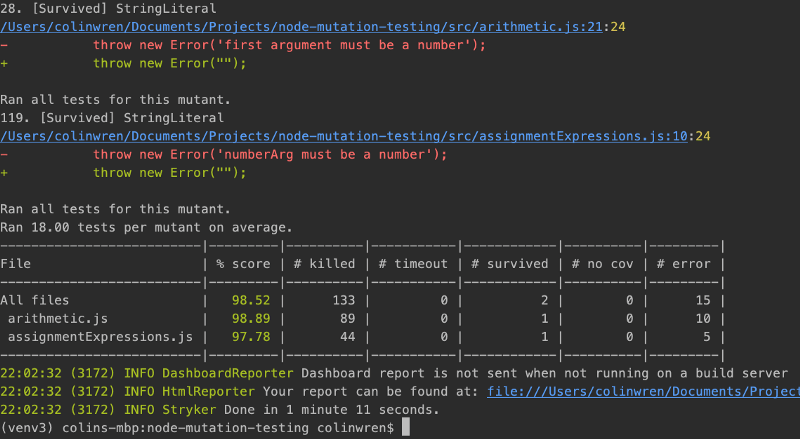

Mutation testing reports and what they mean

The reporting for each mutation testing framework will differ but I’ll explain the general reporting structure you’ll see and how to interpret the results.

There are two metrics that are important to understanding how effective your unit tests are:

- Killed mutant count: you want this to be high. It's the number of times your unit tests didn't pass when the code under test was mutated

- Survived mutant count: you want this to be `0`. It's the number of mutants for which the unit tests still passed even though the code under test was no longer doing what was expected

There is also a runtime error metric which is the number of times the mutation on the code under test resulted in a runtime error. A combination of the runtime error metric and the killed mutant count will indicate how well your unit tests perform.
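These counts are often condensed into a single percentage, usually called a mutation score. The sketch below is a simplified version that only looks at killed and survived mutants. Real tools track more states (Stryker, for example, also reports timeouts and mutants with no test coverage), so `mutationScore` is a hypothetical helper and the numbers are made up for illustration.

```javascript
// Hypothetical helper: percentage of mutants that the tests detected
const mutationScore = ({ killed, survived }) => {
  const total = killed + survived;
  if (total === 0) {
    return 0; // no mutants to judge the tests by
  }
  return (killed * 100) / total;
};

console.log(mutationScore({ killed: 18, survived: 2 })); // 90
```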

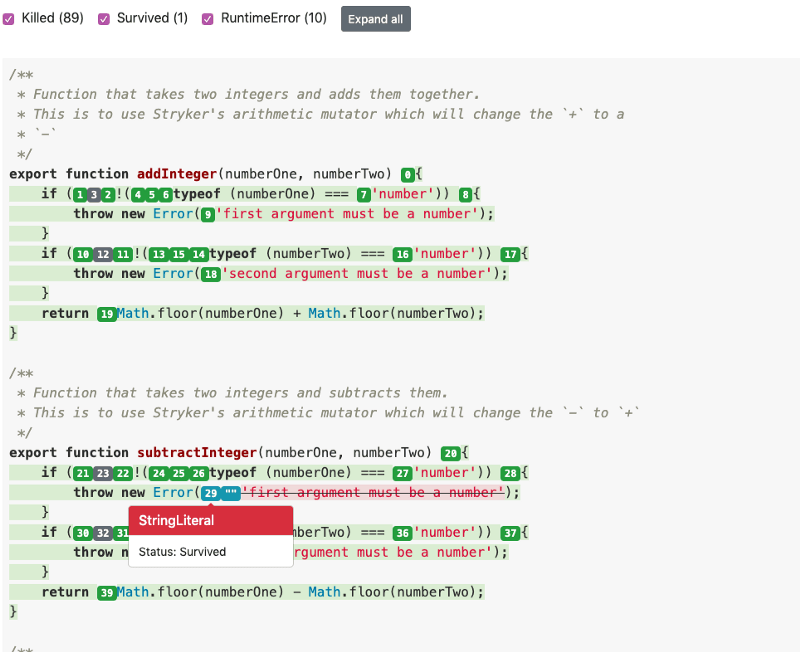

Most tools will also give you a webpage that annotates the code under test with the mutations generated and shows whether each mutant survived or was killed.

In the above screenshot you can see the outcome of using a vague assertion such as `toThrow()` instead of `toThrow('first argument must be a number')`: the string literal mutant that replaced the error message with an empty string survived.

Try it out yourself

The Stryker website has a really good example project I’d recommend you download and experiment with as it illustrates how 100% test coverage doesn’t mean that your tests are catching bugs.