Tutorial: How we built the Reciprocal.dev alpha

— Product Development, Lessons Learned — 17 min read

This post was originally published over on the Reciprocal.dev blog, but as this is a personal story about my journey as much as it is the business’s, I decided to post it here too.

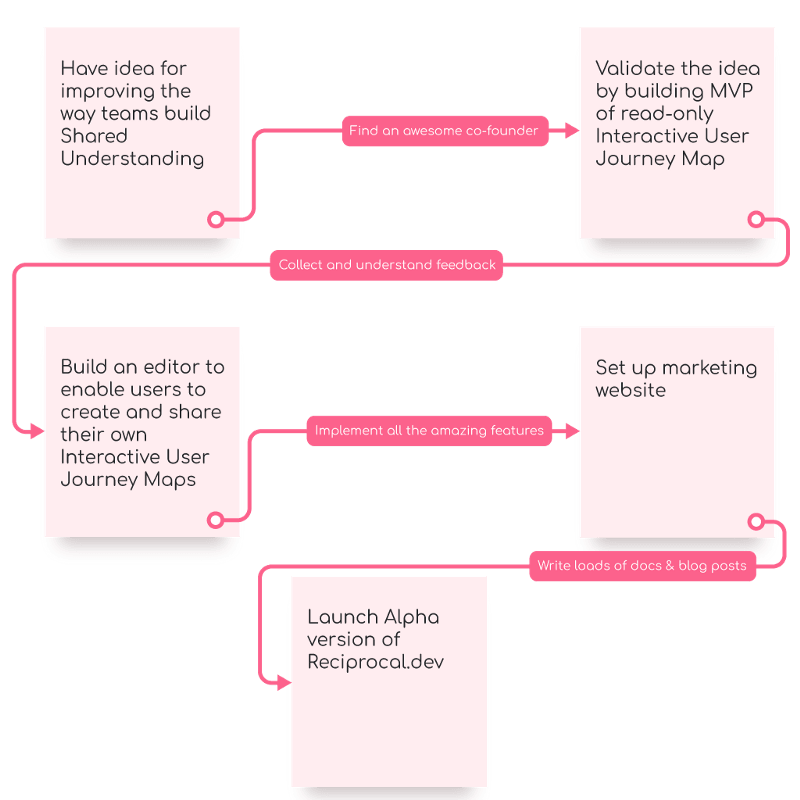

It’s been around 8 months since my co-founder Kev and I started on our journey building Reciprocal.dev, and as we’ve just launched our alpha I thought it would be fun to talk about what led to us banding together and the path we’ve taken to get to alpha.

Before starting reciprocal.dev, Kev and I had worked together in a team at our day job. The team were struggling to understand the work we were being asked to do so we tried a new approach by building mockups and working with the business closely to create a resource to build shared understanding within the team, so we all knew what we were trying to achieve.

These mockups worked really well, but around six months later the team were thrown a different problem: because of the COVID-19 pandemic the UK went into a series of lockdowns, we had to adjust to working remotely, and those mockups moved from paper to diagrams on a Miro board.

Touching the stove

I’m going to go on a little bit of a tangent as this is important to the overall reciprocal.dev story but might seem removed.

Pre-COVID I would spend a lot of time socialising, but during the lockdown I found myself at home with nothing more to do than play Animal Crossing. That can only keep you occupied for a certain amount of time, so I took my first steps into entrepreneurship.

I’d been working on an idea for a CV writing app on-and-off for the last 4 years and never had the confidence to try and execute it, but after building apps for free as a volunteer for a community organisation around a game I played and not really getting much out of it I decided being stuck at home was a good excuse to try and execute my idea.

I gathered a couple of like-minded people I knew to support with running a business and set about working on the app, building mockups and carrying out usability testing with friends and was encouraged when the initial feedback was overwhelmingly positive.

With this wave of positive energy I then dove into coding, working up to 10 hours a day for around 3 months, building the app and ensuring that I followed all the best development practices that I use in my day job, before finally launching the app in the new year.

When it was then time to reap the fruits of all that labour, we published the app to the stores in January in order to capitalise on those looking to find a new job as part of their new year resolutions. In the first month we made £14. The next month we made £7.

Needless to say I was disheartened and I was also really burned out.

I took a couple of weeks to rest up before approaching the app again and then took some more weeks off. During those weeks I looked into what I had done wrong and realised the biggest mistakes I made were not continually getting feedback, and getting feedback from my friends instead of my target customers, as I had assumed that the initial conversations were enough to validate the idea.

I also realised that while I had originally had the idea for a CV writing app because I had found myself having to distill 3 years of working for a startup after it had gone under in 2016, I wasn’t actually passionate about CVs or recruitment.

What I was passionate about was test reporting and building shared understanding. Through talking to Kev about some of the issues we were facing with using Miro and Jira to build shared understanding, I came to realise improving this was where my heart really was.

The issues we had with Miro and Jira were:

- Miro doesn’t allow for easy comparison of different versions of diagrams such as comparing a process in its current implementation and a future implementation

- Miro doesn’t allow for dynamic differences to be applied to a subset of a diagram to help visualise a variance in a process such as those created by different configuration options being used for instance across a set of different regions because of legal obligations

- Miro has very limited interactivity when it comes to annotating and dissecting diagrams. Any means of highlighting a specific set of objects within a diagram needs to be created manually instead of using data to drive this

- Jira is too temporal: it’s based around moving work through a pipeline and not documenting the system’s behaviour or the evolution of a system. Most teams see Jira as the source of truth but if that truth doesn’t get updated and disappears from the team’s immediate reach then it’s not very useful

At the time I had already been playing around with an idea for a Miro plugin to annotate screens and link those annotations to test cases as a means to visualise test coverage, so I was aware of some of the limitations that Miro had from an API point of view and the fact that there was no means to monetise a Miro plugin (you have to build a paid-for service and provide the plugin for free).

So with Miro not meeting our needs we decided to pair up and build our own tool.

Learning not to touch the stove again

Launching my first app was a massive learning experience but seeing that app struggle to make an impact was an even bigger one and I was keen to apply that new learning to reciprocal.dev, focusing on learning about what we wanted to build before jumping into a lengthy development period and Kev’s past experiences running user research sessions really helped make this approach a reality.

We kicked off our ideation for reciprocal.dev by reviewing our list of Miro’s shortcomings and delving into some of their root causes. We came to the conclusion that Miro is more of an ideation tool than a source of truth, with most teams using it to define the problem before abandoning that representation of the information and converting it into something more structured like Jira tickets, which was what the team would then use to build shared understanding.

We then looked into our experiences in ‘agile’ teams and how that shared understanding building exercise tended to go, either via screens pasted into the ticket, a conversation with someone or even an interactive mockup and bi-directional traceability for tests.

We came to the conclusion that the temporal nature of both Miro and Jira was a blocker to teams building shared understanding effectively. These artifacts are either disposed of, not updated or, in Jira’s case, effectively disappear once the work is done. When you want to use them to drive discussion or learn more about the needs of the end user, there’s no way to tie them all together in order to form a bigger picture while retaining that focus on the specific part of the product being worked on.

The opposite of this approach would be a user journey map that would act as a source of truth for not just how the product currently functions but also how it functioned in previous versions and how it will function in future versions and it would do this at different abstraction levels.

The user journey map would be able to display changes introduced by a company’s need to cater for different user bases, be it via A/B testing or something more permanent such as regional requirements, and it would be able to do this against the different versions.

The user journey map would have a means to allow teams to focus in on specific areas while retaining the larger map in order to allow teams to explore the micro and macro at the same time and it would allow teams to annotate it with information that would otherwise sit in silos so that data can be used to help inform discussions.

With this definition of what we wanted to achieve I pulled together some rough screens that showed the concept and we started talking to people we knew in different roles across a range of product-based teams. This was the first application of my learning from the CV app: target your feedback collection at those who might actually use your product and do not lead the discussion with the product. Instead, discuss the problems you’re looking to solve before using the product as an illustration of a possible solution.

The initial feedback was mostly positive, with people getting really excited when I showed them the highlighted user journeys against the full user journey map and telling me how they struggled within their team to ‘see the forest for the trees’ when architects created a map the size of six A0 sheets to visualise the entire system they worked on.

The only potentially negative feedback we received was about integrations. People didn’t want to take a chance on a new tool if there was no means to hook it up to all the other tools they used in order to import and sync that user journey map data with those tools. We didn’t see this as a true negative though and viewed it as more of a user need expressed as a negative.

Creating our MVP but like, a proper MVP

The initial feedback was collected using a set of images and people that we were able to reach out to but we now wanted to collect further feedback from those we didn’t know. This meant building something that people could play with in order to learn about the concepts and asking them to give us feedback over email.

We decided to focus on building a read-only version of the map, as this would be most people’s interaction with the product and being able to focus on just displaying things instead of how to create, edit and delete them would cut the development time down considerably.

After learning of the limitations of the Miro API as a means to build the tool I started looking at frameworks that would enable me to build something similar so it was around this time that I settled on which framework I was going to use. I picked Konva as it had a lot of high quality documentation & examples, an active community and support for multiple JavaScript frameworks.

With that technical decision made it was time to start turning those images I’d created previously into an actual webapp. This gave me an excuse to apply another lesson from the CV app and instead of fixating on setting up CI, hitting 100% code coverage and building rigid data structures I hacked together the functionality, exploring how to tackle the problems I needed to solve as I went and demoed the app to those who’d given that initial feedback as I went.

This was a really fun development cycle and I found myself sometimes implementing a new feature or rewriting part of the system in the space of a few hours, thanks mostly to my enthusiasm to learn more from potential users and the fact that I knew exactly what I was trying to achieve (shared understanding).

Within a month I’d built an example app that would render a user journey map for a fictional ecommerce app called ‘Chocolatebox’. That map had:

- Two versions to show how the tool could show differences in steps, connections, user journeys and test cases based on which version was active

- Two device type variants to show how the tool could show different screens across versions and how the same user journey could follow different paths based on differences caused by device type

- Two region variants to show the tool could show different screen designs across versions based on the territory the app was used in. We used a UK & US version to show differences in currency and address input. As a joke I had added an Australian version too that flipped the screens upside down, but we discarded this

- An A/B test example to show how the tool could be used to visualise the impact of enabling experimental features across different versions
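
To make the idea of combining versions and variants more concrete, here is a minimal TypeScript sketch of how a read-only map could pick which screen to show for a step based on the active version, device and region. It is an illustration only and not the actual Reciprocal.dev data model: the `Selection`, `ScreenRule` and `resolveScreen` names, the version labels and the file names are all assumptions made up for this example.

```typescript
// Hypothetical types for illustration; none of these names come from Reciprocal.dev.
type Device = "mobile" | "desktop";
type Region = "UK" | "US";

interface Selection {
  version: string; // assumed labels such as "v1" or "v2"
  device: Device;
  region: Region;
}

// A rule says "use this screen when the selection matches these conditions".
// Conditions that are left out act as wildcards.
interface ScreenRule {
  screen: string;
  when: Partial<Selection>;
}

// Returns true when every condition on the rule matches the active selection.
function matches(rule: ScreenRule, selection: Selection): boolean {
  return (Object.keys(rule.when) as (keyof Selection)[]).every(
    (key) => rule.when[key] === selection[key]
  );
}

// Picks the most specific matching rule (the one with the most conditions).
// Ties go to the rule listed first; returns undefined when nothing matches.
function resolveScreen(rules: ScreenRule[], selection: Selection): string | undefined {
  let best: ScreenRule | undefined;
  for (const rule of rules) {
    if (!matches(rule, selection)) continue;
    if (!best || Object.keys(rule.when).length > Object.keys(best.when).length) {
      best = rule;
    }
  }
  return best?.screen;
}

// Made-up rules for a checkout step in the fictional Chocolatebox app.
const checkoutRules: ScreenRule[] = [
  { screen: "checkout-default.png", when: {} },
  { screen: "checkout-us-address.png", when: { region: "US" } },
  { screen: "checkout-v2-mobile.png", when: { version: "v2", device: "mobile" } },
];
```

With these rules a UK desktop visitor on `v1` falls back to the default screen, a US visitor on `v1` gets the address variant, and anyone on `v2` mobile gets the redesigned screen because that rule has the most conditions.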

With the MVP built we created a basic landing page explaining what the tool had to offer, with links to the example map. Both the example map and the landing page had calls to action (CTAs) for the user to register their interest in Reciprocal.dev, which we’d then use to conduct the feedback gathering sessions.

MVP feedback

While we had set up a means to collect feedback from people who found the website while searching the internet, I also took the MVP to those who’d given feedback previously as a means to understand which concepts worked well, which needed UX improvements and which were not worth pursuing.

The MVP feedback was more productive and forthcoming than the feedback we got from just showing images as people had a more tangible thing to play with and explore. I reworked some of the UI elements and interactions as a result and about a month after launching the MVP we had a solid idea of what worked.

We were a little thrown off by the number of feature requests that came in as part of gathering the feedback as the more tangible nature of the MVP meant that people started to see how the concepts could be used to solve other problems they had. These ranged from simple things like changing fonts to adding multi-dimensional mapping to visualise changes across different abstraction levels.

During that month we also had a number of people sign up to the reciprocal.dev mailing list and a number of really encouraging emails from people asking us how they could create an account and start building maps straight away.

Based on the analytics I had set up, around 1 in 11 visitors to the website (roughly 9%) registered their interest, and 80% of those who viewed the MVP completed at least one goal relating to toggling the user journeys and/or test cases in a session.

We decided that this high conversion rate and many feature requests meant we were most likely onto something, so decided to prepare for actually building the full app. The first step we took though wasn’t to build a vast backlog of user stories and development tasks but instead to take a step back and analyse what the feedback we’d received during both pre-MVP and MVP meant.

Understanding the feedback

In order to better understand the feedback we had received up to that point we used an Opportunity Canvas and added what we had learned from the feedback sessions in the following boxes:

Users and Customers

We discovered that it’s mostly teams looking to improve the value their product gives to its users and to understand the changes that need to be made to do this

Problems

- Issue trackers like Jira don’t really help teams build up a good picture of what their current product does, only what is being worked on and what will be worked on

- Current tooling does not make it easy to show differences between versions, variants and user journeys

- Delivery teams focus on the smaller details but lose sight of the bigger picture

Current Solutions

- It was around this point that we started to think about where we’d fit into the greater ecosystem as we could have gone in two directions and built either an ideation tool or a documentation tool.

- We realised that by building a documentation tool we’d be able to complement the user’s existing tools

How will people use your solution

- There was a strong leaning towards embedding maps into documentation which helped influence our decision to focus on documentation instead of ideation

- However the tool would provide value during that ideation process by allowing users to import maps into other tools and as a tool to view the product through different lenses in order to explore ideas

Adoption strategy

- We used the integration feedback points here to build out a list of ways we could integrate the tool into different existing tools customers would be using to make it more ‘sticky’

User metrics

- We decided to stick with interviewing users for the time being as this was the most effective means of understanding what was preventing users from buying into the idea

Business Challenges

- The biggest challenge is our size: we have one developer (me) and we’re going to be in the same market as Miro, Google Jamboard, Figma’s FigJam and Atlassian, who could be working on similar functionality at any time, deliver it quicker and at a much larger scale, and have the social proof that comes with an enterprise tool

Budget

- Our time outside of work (and for Kev raising a child) is basically our budget

Business Benefits

- Teams will deliver with less re-work and to a higher quality thanks to improved shared understanding

- Analysis of new ask will be smoother as there will be less duplication of effort thanks to a persistent artifact

- Product Owners will be able to see development data in the context of the user journey map, allowing them to make more accurate prioritisation calls based on the level of bugs, technical debt and uncertainty

- Defects will be easier to triage and root cause analysis sessions will be easier to carry out due to improved visibility of the product’s functionality

- Operational support teams will have access to higher quality information that they can use to train support staff and diagnose issues, and they can use versions to support multiple releases and territories

- Team estimates will be more accurate as they can explore the impact and dependencies of changes

- Improvements to team velocity and better decision making will mean projects and product increments can be released sooner

Once we’d completed the opportunity canvas we were really energised, as we’d been able to re-focus our vision after being distracted by the different feature requests that came in and we’d been able to carve out a niche for the product so we didn’t have to compete directly with Miro.

We transferred that energy into a development plan and then realised how much work was ahead of us.

Building the reciprocal.dev editor

As we had a lot of valuable feedback from the MVP and its example map I decided to develop the editor in the same code base so we could continue to get that feedback, using a feature toggle to turn on ‘edit mode’ that would allow the map to be edited and another feature toggle to load an empty map.
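
As a rough illustration of this kind of setup, the sketch below shows two boolean toggles deciding which map is loaded and whether editing is enabled. The flag names (`editMode`, `emptyMap`), the `JourneyMap` shape and the placeholder steps are assumptions for the example rather than the real implementation.

```typescript
// Hypothetical flag names; the text above only says that one toggle enabled
// 'edit mode' and another loaded an empty map.
interface FeatureFlags {
  editMode: boolean;
  emptyMap: boolean;
}

// Defaults describe the read-only example map, so existing visitors see no change.
const DEFAULT_FLAGS: FeatureFlags = { editMode: false, emptyMap: false };

// Merges any overrides (from a query string, config file, etc.) over the defaults.
function resolveFlags(overrides: Partial<FeatureFlags> = {}): FeatureFlags {
  return { ...DEFAULT_FLAGS, ...overrides };
}

// Assumed, heavily simplified map shape.
interface JourneyMap {
  name: string;
  steps: string[];
}

// Placeholder content standing in for the example map.
const EXAMPLE_MAP: JourneyMap = {
  name: "Chocolatebox",
  steps: ["Home", "Basket", "Checkout"],
};

// Chooses which map to load and whether editing controls should be shown.
function bootstrap(flags: FeatureFlags): { map: JourneyMap; editable: boolean } {
  const map: JourneyMap = flags.emptyMap ? { name: "Untitled", steps: [] } : EXAMPLE_MAP;
  return { map, editable: flags.editMode };
}
```

Calling `bootstrap(resolveFlags())` gives the read-only example map, while `bootstrap(resolveFlags({ editMode: true, emptyMap: true }))` gives a blank, editable one, which is what lets both experiences live in the same code base.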

Once I was able to build the edit mode without impacting the read only map I started working on designing how the editor would work in a similar manner to how we’d worked previously, building mockups to get initial feedback before adding those to the MVP and getting feedback as I built it.

Unfortunately at the same time I was designing the editor I was also thinking about how to implement the editor & how the data behind the app would be modelled and as a result of doing too many things I forgot that most important lesson of prioritising feedback.

In a bid to get some control over the overwhelming complexity involved in building an editor I felt like the right thing to do was to tackle the hardest part (for me anyway) first, which was the data model work.

It was not the right thing to do.

I sank a month into designing and building up a data model library based on the functionality we had originally planned following our post-MVP session and while it was an elegant solution to that set of problems it was long, painful work and in the long run a waste of time.

During one of our weekly catch ups Kev, in response to me expressing how boring data modelling work is, pointed out that we’d lost our feedback loop and that maybe talking to people again would be a nice break and allow us to understand the user’s needs from the editor instead of working off assumptions.

Sure enough, during the first sessions I held to discuss the editor and what it might look like, the majority of the concepts that were in that original data model either never came up or were confusing for people. After a couple of iterations over the editor designs, the concepts in the data model I had been struggling with had been removed for being too complex and over-engineered for users to see any value in.

After a number of further iterations the editor’s functionality and UX matured but there was still one problem we were facing which was summarised perfectly by one piece of feedback we had — “You tell me that versions are a key concept of the app but I can’t see that because it’s hidden under a menu”.

In order to have some conformity between the interactions the user has with the app I had put versions, user journeys and layers under menu items in a toolbar, but this uniform approach actually made it harder for the people to interact with the version functionality because the version is used in combination with the other tools and is updated a lot as users compare map versions.

Once I had solved this problem around selecting a version in the editor, both the editor and the read-only MVP map felt far more efficient, as you could have both the user journey list and the version switcher open at the same time, making it easier to compare user journeys and test cases across different versions.

With the UX now in a good place I started to build the editor up and with the removal of the complexities I had previously introduced to the data model it became a lot easier to work with. This made developing the editor’s functionality a lot simpler so I was able to make good progress on this soon after.

After another month I had all the core functionality of the editor implemented but I wasn’t happy with the UI as it wasn’t consistent so I spent some time redesigning the UI library I had built for creating mockups to cater for all the new interactions that the editor had added. Once I’d implemented those design changes the extra layer of polish made everything feel more ‘real’ and I was ready to start the process of taking a cut ready for an alpha offering.

If you’re interested in the more technical aspects of building the editor with Konva here’s a list of blogs I’ve posted as I’ve been developing it:

- Adding Zoom and Pan to the map

- Adding a Mini-Map

- Adding Drag and Drop to add objects to the stage

- Creating Connections between entities

- Building an Editable SVG Path

Introducing scope creep

Originally we had planned to launch an alpha with no backend for users to save their data outside of their browser (saving to local storage instead), and from a technical standpoint we had that functionality. But as I thought more about the goal we were trying to achieve with the alpha, I felt like we were missing an opportunity to engage with users and get feedback from them.

If we’d launched as planned and saved the map data to local storage the only means we would have had to gather feedback would have been to rely on a button in the UI and the kindness of people using the app to send us an email and there’s a potential that a lot of that feedback would have been about how there’s no way to save a map so it can be used on a different machine.
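
For context, saving a map to local storage could look something like the sketch below. The `SavedMap` shape, the `map:` key prefix and the function names are assumptions for illustration; the only real API mirrored here is the `getItem`/`setItem` pair from the browser’s Web Storage API, which `window.localStorage` implements.

```typescript
// Minimal subset of the Web Storage API, so the sketch also runs outside a browser.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

// Hypothetical map shape for illustration only.
interface SavedMap {
  id: string;
  name: string;
  versions: string[];
}

const STORAGE_PREFIX = "map:"; // assumed key prefix

// Serialises the map to JSON and stores it under a per-map key.
function saveMap(store: KeyValueStore, map: SavedMap): void {
  store.setItem(STORAGE_PREFIX + map.id, JSON.stringify(map));
}

// Loads a map by id; returns undefined if it is missing or unreadable.
function loadMap(store: KeyValueStore, id: string): SavedMap | undefined {
  const raw = store.getItem(STORAGE_PREFIX + id);
  if (raw === null) return undefined;
  try {
    return JSON.parse(raw) as SavedMap;
  } catch {
    return undefined; // corrupted entry: treat it as missing
  }
}

// In-memory stand-in; in a browser you would pass window.localStorage instead.
function createMemoryStore(): KeyValueStore {
  const data: Record<string, string> = {};
  return {
    getItem: (key) =>
      Object.prototype.hasOwnProperty.call(data, key) ? data[key] : null,
    setItem: (key, value) => {
      data[key] = value;
    },
  };
}
```

Data saved this way never leaves the browser it was created in, which is exactly the limitation described above: a map built on one machine can’t be opened on another.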

We had the work to save data to a backend and an account system scheduled for the beta so I decided to pull that forward to be included in the alpha so we could remove any possible complaints around saving maps but also the requirement to have an account to edit a map meant we could prompt users to consent to a user interview when they signed up for an account which would be a more effective means of collecting feedback.

While this scope creep aligned with our goals of being driven by user feedback, it did delay the release of the alpha by another month as new screens needed to be added and whole new apps needed to be created for managing the user’s account. However, I think in the long run it was better to tackle these sooner, as a number of problems arose that would have been inconvenient to address retroactively once people were actively using the product.

After completing the account and backend work the alpha was in a really good state and we started looking at the marketing side of launching the alpha.

Content is King

Around the same time that I was finishing off the editor Kev had started to pull together a plan for what we needed to have in place on the website in order to get people interested in signing up for the alpha, as well as the legal notices we needed.

We encountered a bottleneck on this front though, as I had originally built the MVP site in Gatsby by hand coding the HTML for the page, and this meant that in order to update the site with Kev’s content we’d need me to make the changes. To unblock Kev we started looking at a CMS solution for Gatsby and settled on GraphCMS.

Kev spent some time modelling the different content blocks in GraphCMS we needed for the site and adding the content ready for me to hook up Gatsby to pull in the data via GraphQL and generate the pages and content.

Once I was feature complete on the editor I moved from building static mockups to building some motion graphics for a set of tutorial videos that would support the written documentation. Not having touched After Effects since I left university in 2009, I found it quite enjoyable to revisit.

And we’re live!

After 8 months of learning and hard graft I’m really excited that the Reciprocal.dev alpha is now ready for everyone to use.

You can register for a free account at https://reciprocal.dev/account and we’d really appreciate it if you’d check the user interview consent box during sign up so we can get your feedback on how you found working with the editor.

As we’re in alpha there are some limits on the number of maps and versions that you can create in order to keep costs low. Accounts are limited to creating 3 maps and each map is limited to 10 versions although you’ll be able to delete maps and versions should you meet these limits.

We really hope that Reciprocal.dev helps your team build shared understanding and you see the benefits of having a persistent interactive user journey map.